Time Complexity & Calculations | All You should know

Time Complexity & Calculations

Time complexity measures the time taken to run each statement of code in an algorithm.

Space and Time complexity can define the effectiveness of an algorithm.

💡 Time complexity depends on the number of inputs passed to that algorithm. And, there is a relation between the input data size (n) and the number of operations performed (N) with respect to time.

💡 This relation between input data size and the number of operations is denoted as Order of growth and represented as O[n] where O is the order of growth and n is the length of the input. It is also called ‘Big O Notation’.

✔ Why need of time complexity?

Clearly Understand that a problem can be solved in more than one way. We need to learn how to compare the performance of different algorithms and choose the best one to solve a particular problem. While analyzing an algorithm, we mostly consider time complexity and space complexity.

NOTE:

✔ Time Complexity of algorithm/code is not equal to the actual time (in seconds or milliseconds) required to execute a particular code but the number of times a statement executes.

For exact same code and the same number of lines, we can get different timings on the different machines (hardware, operating system, processors, etc.).

So, we can say that the actual time requires to run code is machine-dependent and network load.

We can also get the same timings on the same machine for the same code, the reason behind that is the current network load.

function printHello() {

console.log('Hello');

}

In the above code “Hello” will print only once on Console. So, here time complexity is constant: O(1) i.e. every time the constant amount of time is required to execute code, no matter which operating system or which machine configurations you are using.

💻 In JavaScript, we can calculate the number of execution by a console method.

console.count('number of executuion');

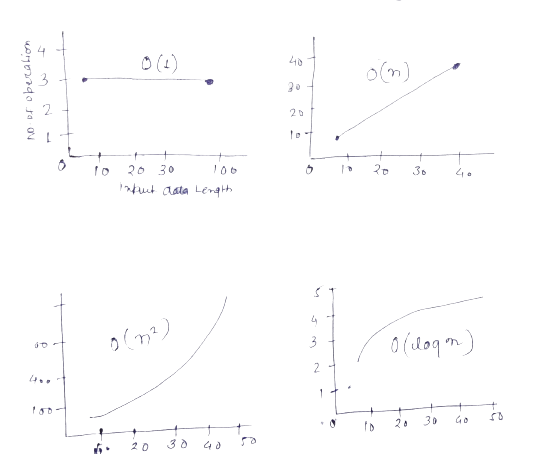

💡 There are different types of time complexities used, let’s see one by one:

- Constant time – O (1)

- Linear time – O (n)

- Logarithmic time – O (log n)

- Linearithmic Time O(n log n)

- Quadratic time – O (n^2)

- Cubic time – O (n^3)

- Exponential Time – O(2^n)

- Factorial Time – O(n!)

- Quasilinear time

Constant time – O (1)

When An algorithm is not dependent on the input size n. Irrespective of the input size n, the runtime will always be the same.

Linear time – O(n)

When an algorithm’s running time increases linearly with the length of the input. It is known as Linear Time complexity.

Logarithmic time – O (log n)

An algorithm is said to have a logarithmic time complexity when it reduces the size of the input data in each step. The number of operations gets reduced as the input size increases.

A most used example of log(n) is binary trees or binary search functions.

Quadratic time – O (n^2)

Generally, nested loops come under this time complexity order where for one loop takes O(n) and if the function involves a loop within a loop, then it goes for O(n)*O(n) = O(n^2) order.

💡 We use different notations to describe the limiting behavior of a function.

O-notation:

To denote asymptotic upper bound, we use -notation. It is called Big-O notation.

Ω-notation:

To denote asymptotic lower bound, we use -notation. It is called Big Omega notation.

Θ-notation:

To denote asymptotic tight bound, we use -notation. It is called Big Theta notation.

💡 Highlight:

While analyzing an algorithm, we mostly consider O-notation because it will give us an upper limit of the execution time i.e. the execution time in the worst case.

💡 Calculation –

To compute O-notation we will ignore the lower order terms since the lower order terms are relatively insignificant for large input.

T = 3 * n^2 + 4 * n + 7;

O(n) = n^2. we ignore relative small constants here because it does not have more impact with a very large amount of inputs.

Constant time –

The Below algorithm returns the last element of the given aray. Here no matter how many inputs are there, It will always run in O(1) time.

const arr = [1, 2, 3, 4, 5, 6, 7, 8, 9];

// Return last element from array

function getElement(arr) {

console.count('no. of execution');

return arr[arr.length - 1];

}

getElement(arr);

no. of execution: 1

9

Linear Search Example –

The below program will print every value of the given array and this will execute in O(n) time.

const arr = [1, 2, 3, 4, 5, 6, 7, 8, 9];

for (let i = 0; i < arr.length; i++) {

console.count('no. of execution');

console.log(i);

}

no. of execution: 1

0

no. of execution: 2

1

no. of execution: 3

2

no. of execution: 4

3

no. of execution: 5

4

no. of execution: 6

5

no. of execution: 7

6

no. of execution: 8

7

no. of execution: 9

8

Quadratic Time –

There is an array of three elements. Return all pairs that can be generated by these three items. The below Algorithm contains two nested for loop that’s why in worst-case time complexity is O(n^2)

const arr = ['a', 'b', 'c'];

function getCombinedElement(arr) {

const output = [];

for (let i = 0; i < arr.length; i++) {

console.count('execution');

for (let j = 0; j < arr.length; j++) {

console.count('execution');

output.push(arr[i] + arr[j]);

}

}

return output;

}

getCombinedElement(arr);

execution: 12

(9) ["aa", "ab", "ac", "ba", "bb", "bc", "ca", "cb", "cc"]

getCombinedElement(['a', 'b', 'c', 'd']);

execution: 20

(16) ["aa", "ab", "ac", "ad", "ba", "bb", "bc", "bd", "ca", "cb", "cc", "cd", "da", "db", "dc", "dd"]

Logarithmic time –

const binarySearch = function (arr, target) {

let left = 0;

let right = arr.length - 1;

while (left <= right) {

console.count('execution')

const midIndex = Math.floor((left + right) / 2);

const mid = arr[midIndex];

if (target === mid) {

return mid;

} else if (target > mid) {

left = midIndex + 1;

} else {

right = midIndex - 1;

}

}

return -1;

};

binarySearch([1,2,3,4,5,6,7,8], 8);

execution: 1

execution: 2

execution: 3

execution: 4

8

T = log2(8) = 3 (very near to 4)

binarySearch([1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16], 15);

execution: 1

execution: 2

execution: 3

execution: 4

T = 2^4 = 16

T = log2(16) = 4

O(log n) – This generally happens in Binary Search Algorithm.

O(n log n) – Linearithmic Time Complexity. One example of an algorithm that runs with this time complexity is Quick Sort, Heap Sort, Merge Sort.

Time Complexity & Calculations

✔ JavaScript Rotate 2D matrix 90 degrees clockwise | Top Interview Question

✔ HashTable Data Structure in JavaScript with Add Delete & Search Algorithms

✔ Trie Data Structure – Insert Search Delete & Print Program with JavaScript

✔ Top JavaScript Commonly asked Algorithms in Interview

✔ JavaScript String Permutation Program with nice explanation

Time Complexity & Calculations